Knowledge Tracing with Contrastive Learning

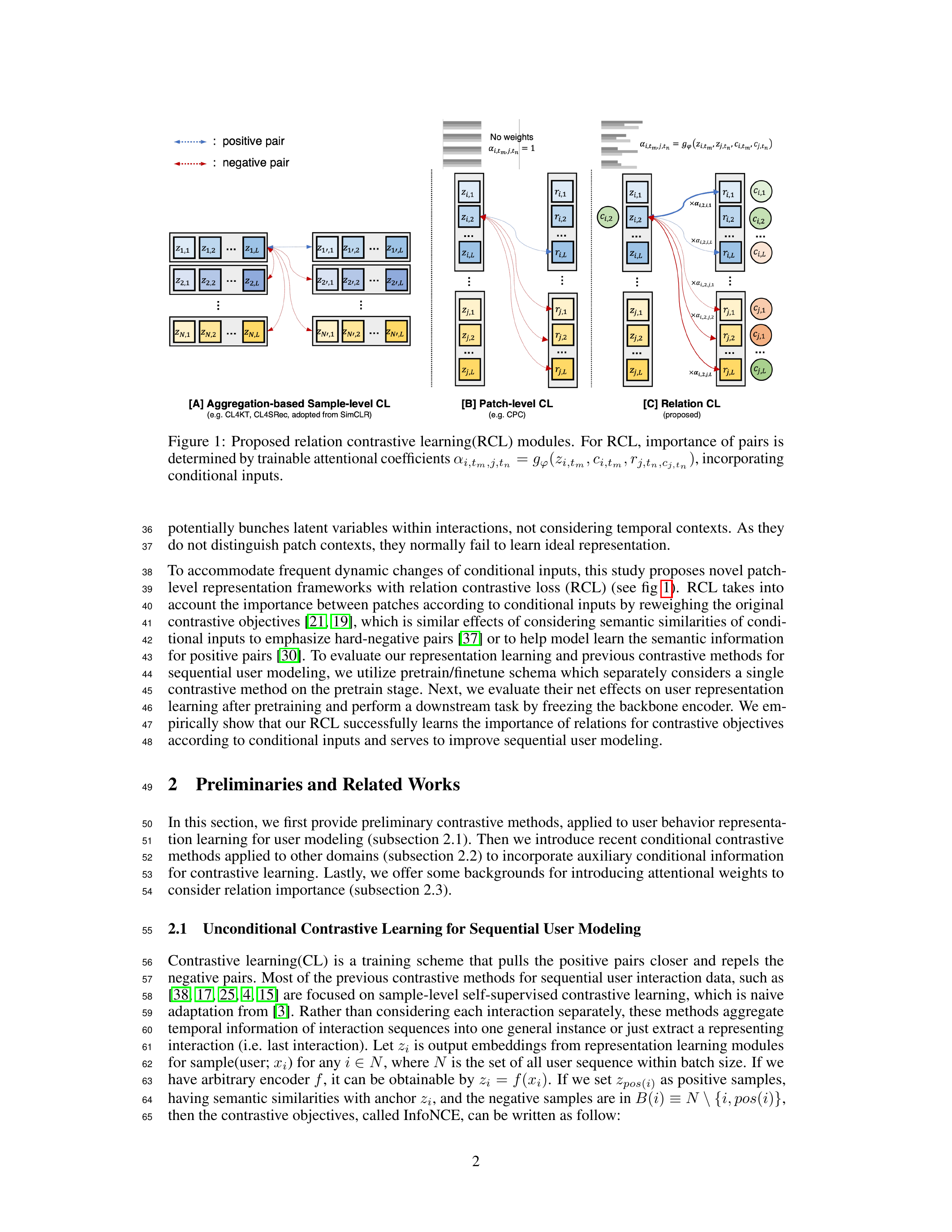

Proposed the ACCL and RCL contrastive learning methods at RIIID, achieving state-of-the-art results on student modeling across 6 benchmarks (dropout prediction, knowledge tracing). Deployed to the Santa TOEIC platform.

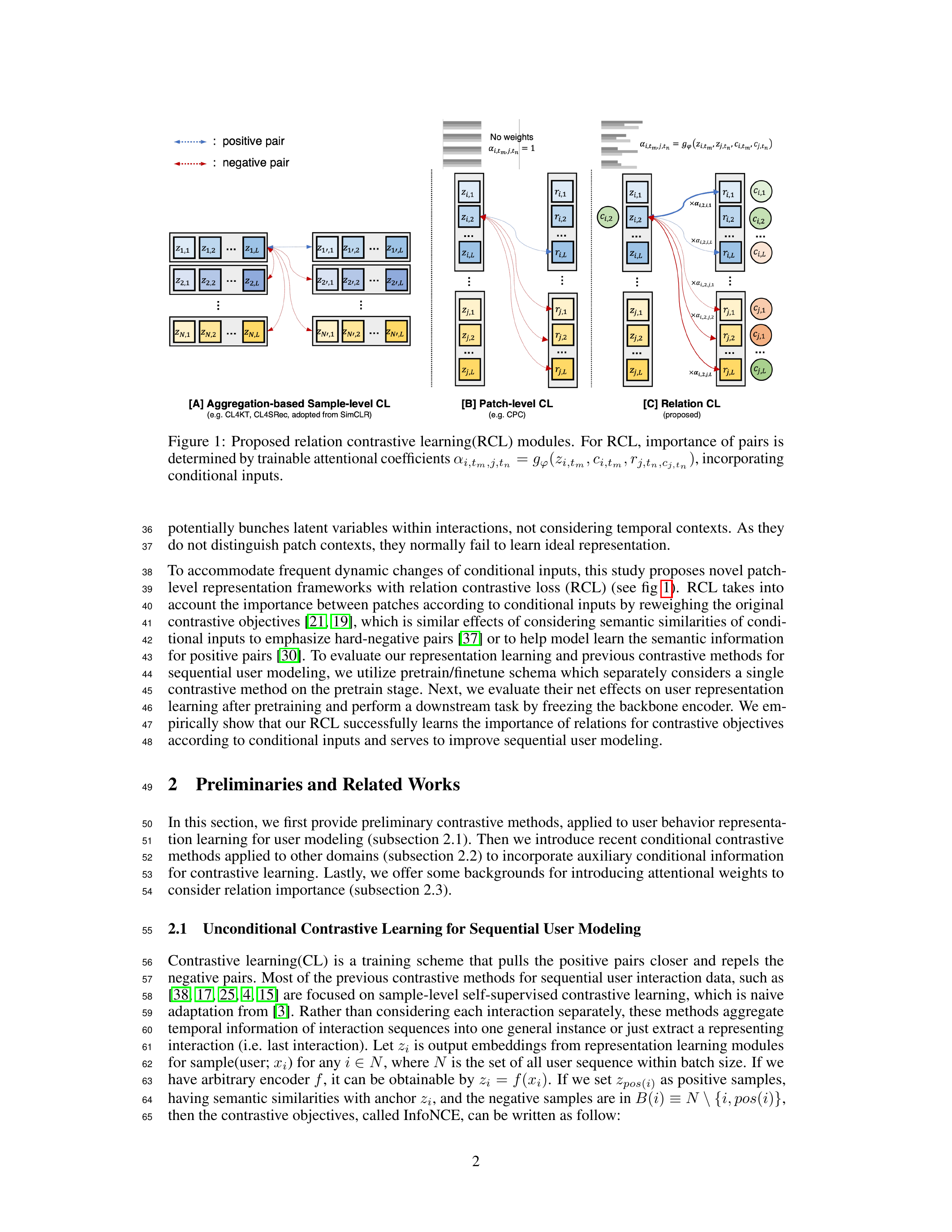

Built an ML model registry (MLflow) and dataset pipelines (Airflow, Athena, BigQuery) at RIIID, serving 4+ products including SANTA TOEIC, IVYGlobal SAT, CASA GRANDE, and INICIE.

Introduced RIIID’s first multi-GPU training setup, boosting GPU utilization from 25% to 95% and cutting initialization time from 1 hour to 10 seconds. Built CI/CD pipelines with GitHub Actions.