Overview

OHouseAI is an AI-powered interior design generation service at Bucketplace (오늘의집). I led the systematization of its GenAI workflow, modularizing the LangGraph-based architecture into a Pipeline Provider + Subgraph pattern. The service ranked #8 in Korea in the Graphics/Design category.

Key Achievements

- Net Satisfaction Score (NSS): +253%

- Positive-Negative Ratio (PN): +205%

- User Engagement: Requests per user increased by 2.3x

- Retention: Doubled from 7% to 14%

- Latency: Reduced from 71.7s to 35.7s (about 50% lower)

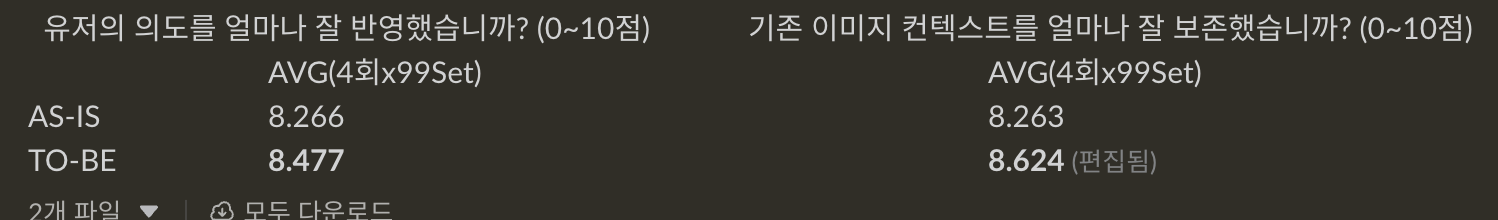

- Model Quality: Outperformed GPT-IMAGE-1.5 and Gemini Nano, scoring 8.3/10 on intent reflection and 8.1/10 on context preservation

Technical Approach

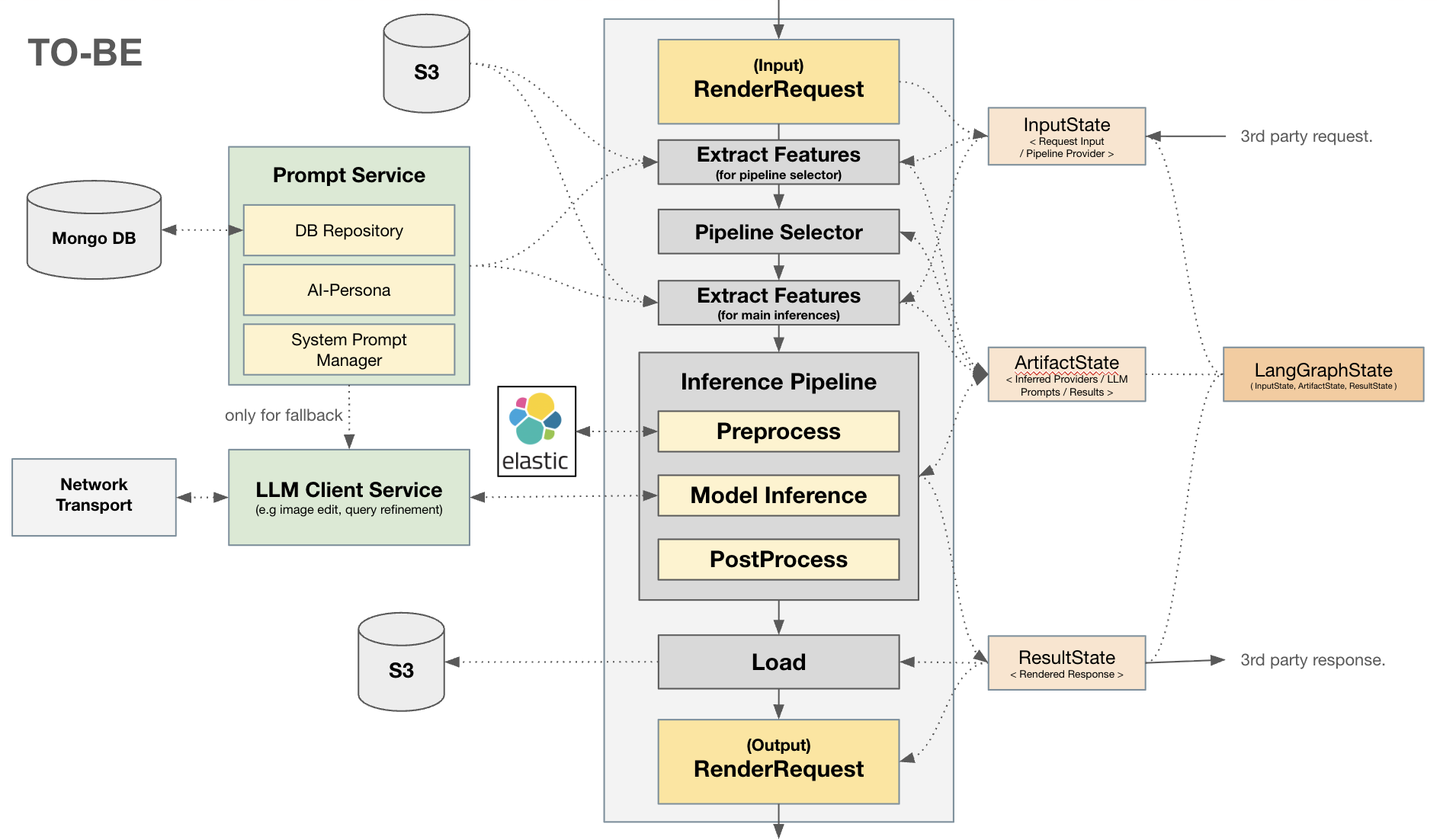

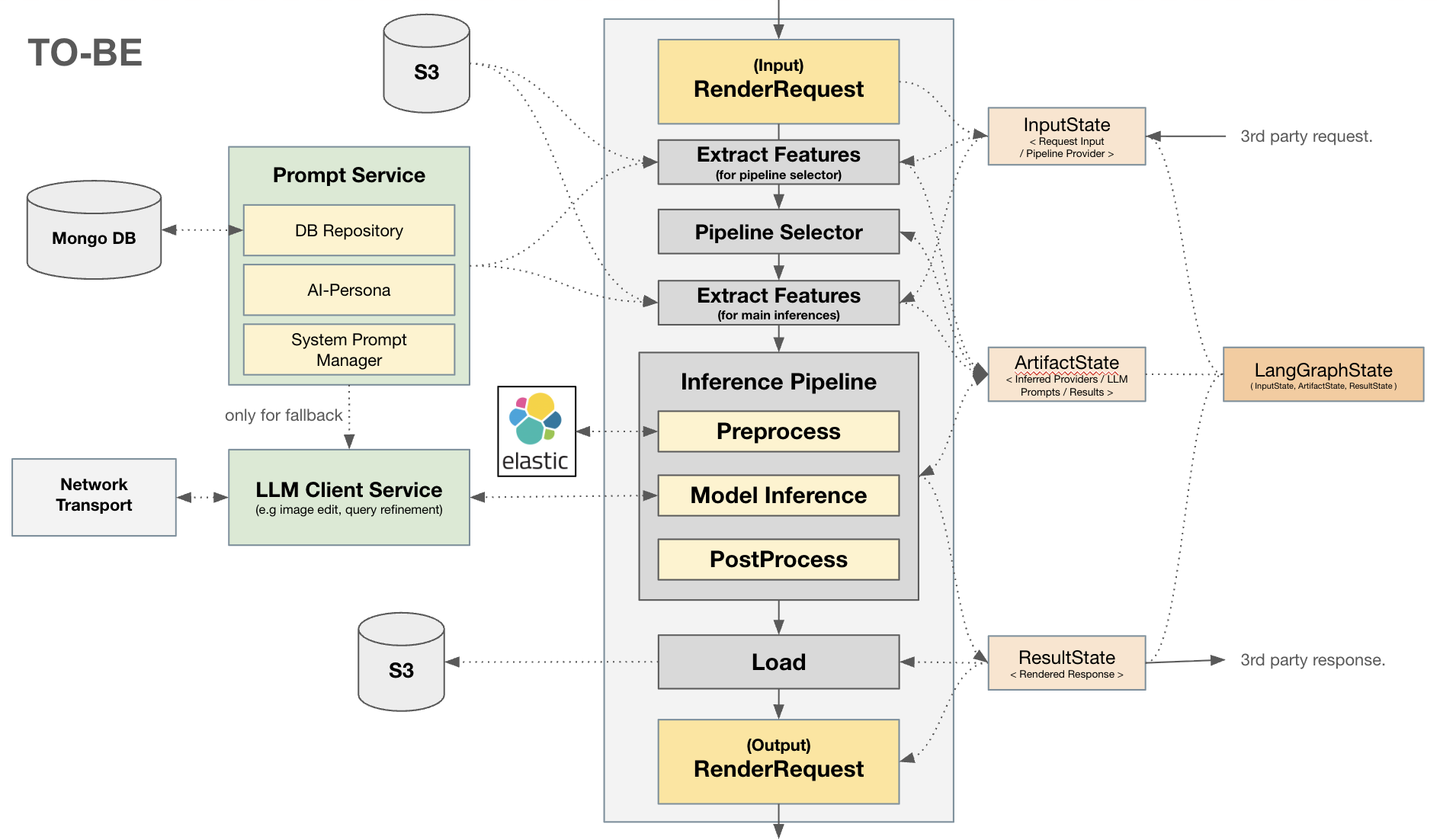

Pipeline Provider + Subgraph Architecture

Modularized the GenAI workflow into composable components using LangGraph:

- Pipeline Provider: Centralized orchestration layer managing the lifecycle of generation requests

- Subgraph Pattern: Each generation capability is implemented as an independent subgraph, enabling independent iteration

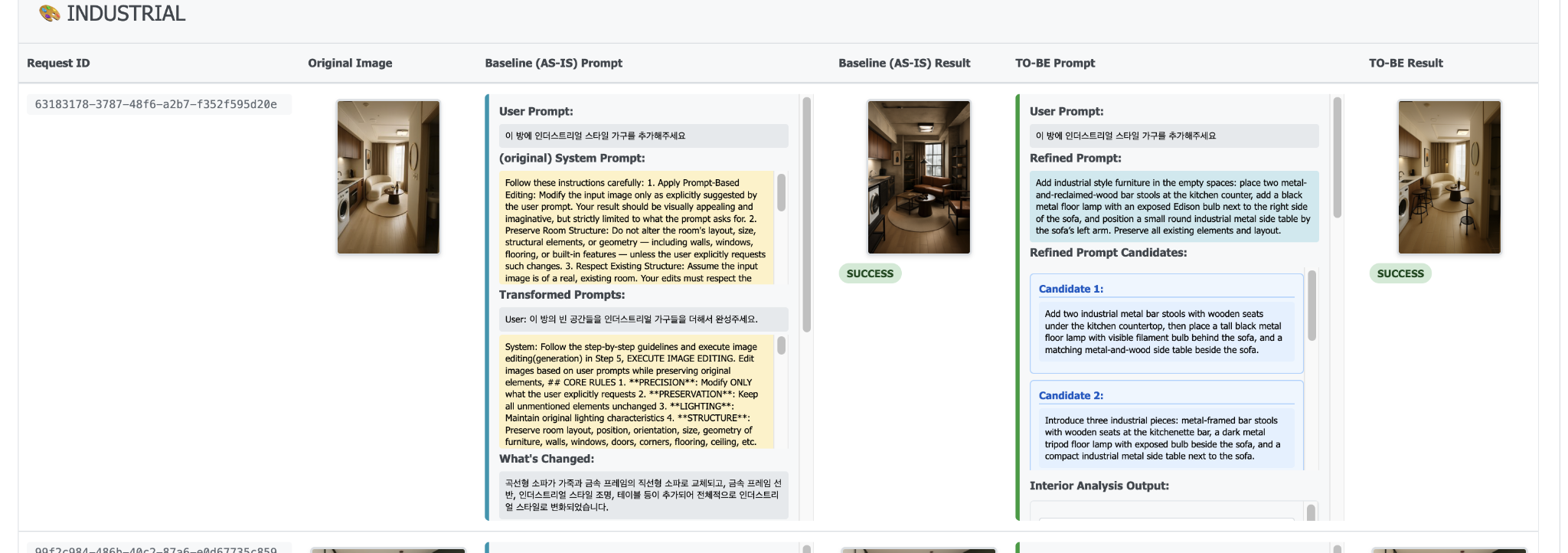
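The pattern above can be sketched without any framework code. This is a minimal, library-free illustration, not the production implementation: `PipelineProvider`, `GenerationState`, and the capability names `restyle` and `furnish` are hypothetical, and each plain function stands in for what would be a compiled LangGraph subgraph.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class GenerationState:
    """Hypothetical shared state; the field names are illustrative only."""
    capability: str
    prompt: str
    trace: List[str] = field(default_factory=list)
    result: str = ""


# A "subgraph" here is any callable that takes the state and returns it updated.
Subgraph = Callable[[GenerationState], GenerationState]


class PipelineProvider:
    """Central registry that routes each request to one capability subgraph."""

    def __init__(self) -> None:
        self._subgraphs: Dict[str, Subgraph] = {}

    def register(self, capability: str, subgraph: Subgraph) -> None:
        self._subgraphs[capability] = subgraph

    def run(self, state: GenerationState) -> GenerationState:
        if state.capability not in self._subgraphs:
            raise KeyError(f"no subgraph registered for {state.capability!r}")
        # The provider owns the request lifecycle: start, dispatch, end.
        state.trace.append(f"start:{state.capability}")
        state = self._subgraphs[state.capability](state)
        state.trace.append(f"end:{state.capability}")
        return state


def restyle_subgraph(state: GenerationState) -> GenerationState:
    # Stand-in for a real generation flow (hypothetical capability).
    state.trace.append("restyle")
    state.result = f"restyled room for: {state.prompt}"
    return state


def furnish_subgraph(state: GenerationState) -> GenerationState:
    # A second capability that can change without touching the first.
    state.trace.append("furnish")
    state.result = f"furnished room for: {state.prompt}"
    return state


provider = PipelineProvider()
provider.register("restyle", restyle_subgraph)
provider.register("furnish", furnish_subgraph)

out = provider.run(
    GenerationState(capability="restyle", prompt="warm Scandinavian living room")
)
print(out.result)
print(out.trace)
```

Because the provider only knows the capability name and the shared state shape, a new generation type can be added with one `register` call and iterated on in isolation.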

LLM-as-a-Judge Evaluation Pipeline

Built a batch evaluation pipeline for data-driven model selection:

- Scale: 4 evaluation rounds x 99 test sets

- Methodology: Automated side-by-side comparisons with structured scoring:

  - Intent Reflection: How well the output matches the user’s design intent

  - Context Preservation: How faithfully the output preserves the original context

- Application: Used to compare candidate models (including GPT-IMAGE-1.5 and Gemini Nano) for production model selection
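The batch structure described above (rounds x test sets x candidate models, two scored criteria) might be aggregated roughly as follows. This is a sketch under stated assumptions: `JudgeScore`, `run_batch`, `summarize`, and the model names `candidate-a` and `candidate-b` are hypothetical, and `stub_judge` returns fixed placeholder numbers where a real pipeline would call a judge LLM. The numbers are not evaluation results.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Callable, Dict, Iterable, List


@dataclass(frozen=True)
class JudgeScore:
    """One structured verdict from the judge (scores on a 0-10 scale)."""
    model: str
    round_id: int
    case_id: int
    intent_reflection: float
    context_preservation: float


# A judge maps (model, round, test case) to a structured score.
Judge = Callable[[str, int, int], JudgeScore]


def run_batch(models: Iterable[str], rounds: int, cases: int, judge: Judge) -> List[JudgeScore]:
    """Score every model on every test case in every round."""
    return [
        judge(model, r, c)
        for model in models
        for r in range(rounds)
        for c in range(cases)
    ]


def summarize(scores: Iterable[JudgeScore]) -> Dict[str, Dict[str, float]]:
    """Average each criterion per model."""
    by_model: Dict[str, List[JudgeScore]] = {}
    for s in scores:
        by_model.setdefault(s.model, []).append(s)
    return {
        model: {
            "intent_reflection": round(mean(s.intent_reflection for s in rows), 2),
            "context_preservation": round(mean(s.context_preservation for s in rows), 2),
        }
        for model, rows in by_model.items()
    }


def stub_judge(model: str, round_id: int, case_id: int) -> JudgeScore:
    # Placeholder only: a real judge would prompt an LLM with the input image,
    # the user's request, and the generated output, then parse its scores.
    base = 7.0 if model == "candidate-a" else 6.0
    return JudgeScore(model, round_id, case_id, base, base - 0.5)


scores = run_batch(["candidate-a", "candidate-b"], rounds=4, cases=99, judge=stub_judge)
summary = summarize(scores)
best = max(summary, key=lambda m: summary[m]["intent_reflection"])
print(len(scores), summary, best)
```

Keeping the judge output as a typed record rather than free text is what makes the per-criterion comparison across models mechanical.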

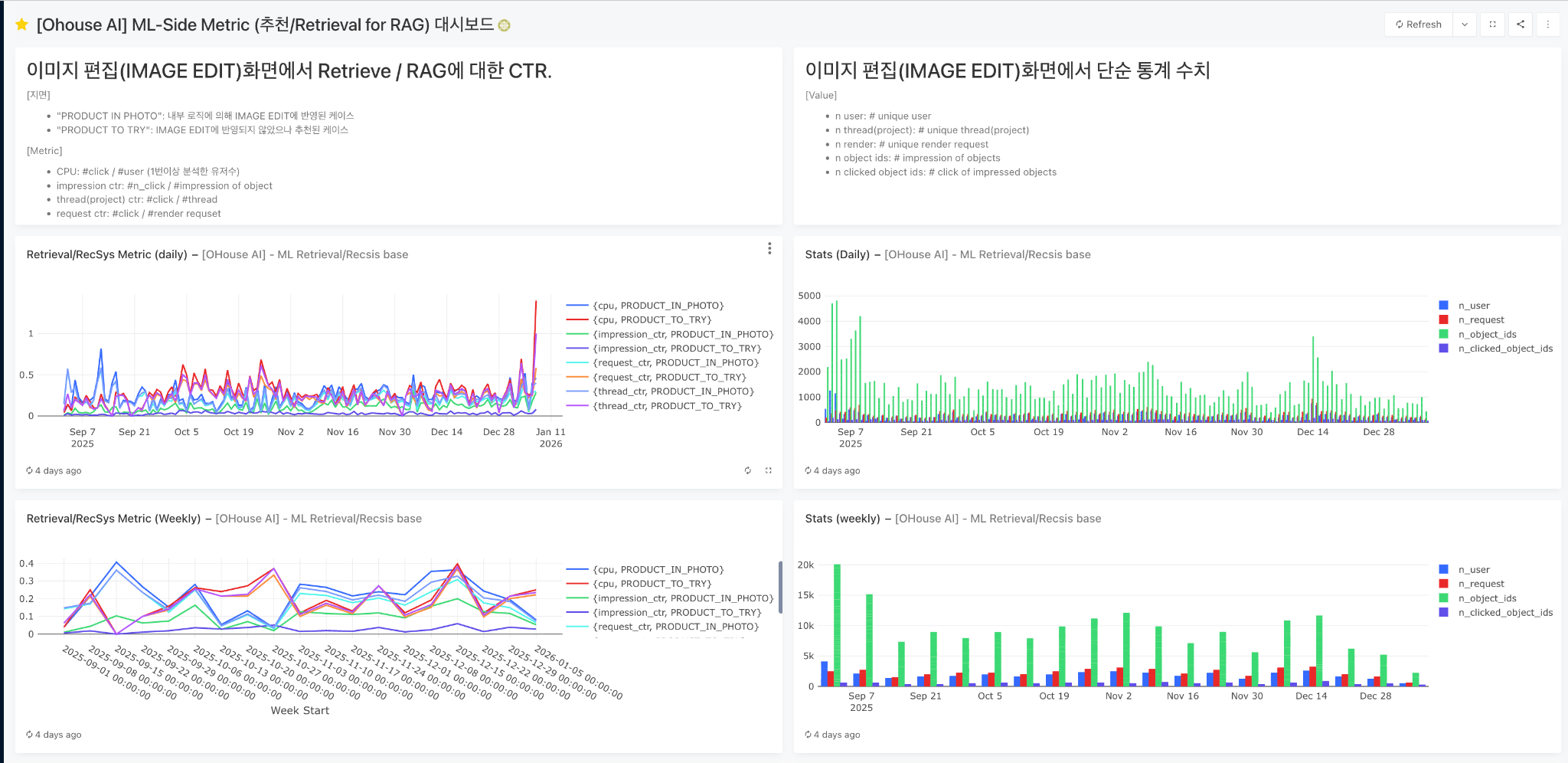

Monitoring and Observability

Production traffic is monitored through LangFuse, which provides end-to-end tracing of the generation pipeline.

Architecture Systematization

Restructured the existing LangGraph pipeline into a modular Pipeline Provider + Subgraph pattern:

- Before: Monolithic LangGraph graph handling all generation types in a single flow

- After: Composable subgraphs per generation capability, orchestrated by a centralized Pipeline Provider, with checkpointed LangGraph state persisted in Redis and domain objects stored in MongoDB

- Benefits: Independent iteration on each generation type, modular prompt management, structured request monitoring, and performance evaluation
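The idea behind checkpointed state can be illustrated with a small sketch: after each step the state is saved under a request key, so a retried request resumes from the last completed step instead of starting over. This is not LangGraph's checkpointer API. `InMemoryCheckpointStore` is a hypothetical stand-in for a key-value store such as Redis, and the step names and state fields are illustrative.

```python
import json
from typing import Callable, Dict, List, Optional, Tuple

Step = Tuple[str, Callable[[dict], dict]]


class InMemoryCheckpointStore:
    """Dict-backed stand-in for a key-value store such as Redis (assumption)."""

    def __init__(self) -> None:
        self._data: Dict[str, str] = {}

    def save(self, thread_id: str, next_step: int, state: dict) -> None:
        # Serialize so the sketch behaves like a store that only holds strings.
        self._data[thread_id] = json.dumps({"next_step": next_step, "state": state})

    def load(self, thread_id: str) -> Optional[dict]:
        raw = self._data.get(thread_id)
        return json.loads(raw) if raw is not None else None


def run_with_checkpoints(
    thread_id: str, steps: List[Step], initial_state: dict, store: InMemoryCheckpointStore
) -> dict:
    """Run steps in order, checkpointing after each; resume if a checkpoint exists."""
    saved = store.load(thread_id)
    start = saved["next_step"] if saved else 0
    state = saved["state"] if saved else dict(initial_state)
    for i in range(start, len(steps)):
        _name, fn = steps[i]
        state = fn(state)
        store.save(thread_id, i + 1, state)
    return state


calls: List[str] = []
attempts = {"generate": 0}


def analyze(state: dict) -> dict:
    calls.append("analyze")
    return {**state, "analysis": "room layout parsed"}


def generate(state: dict) -> dict:
    calls.append("generate")
    attempts["generate"] += 1
    if attempts["generate"] == 1:
        raise RuntimeError("transient failure")  # simulate a failure on first try
    return {**state, "image": "generated"}


store = InMemoryCheckpointStore()
steps: List[Step] = [("analyze", analyze), ("generate", generate)]
request = {"prompt": "cozy bedroom"}

try:
    run_with_checkpoints("req-1", steps, request, store)
except RuntimeError:
    pass  # the first attempt fails after "analyze" has been checkpointed

final = run_with_checkpoints("req-1", steps, request, store)
print(calls)
print(final)
```

On the retry, `analyze` is skipped because its result is already in the checkpoint; only `generate` runs again.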

Tech Stack

- Agent/Workflow Framework: LangChain, LangGraph

- Backend: FastAPI, Kafka

- Storage: MongoDB (domain objects), Redis (LangGraph persistence)

- Observability: LangFuse

- Evaluation: LLM-as-a-Judge (4 rounds x 99 test sets)

- Architecture Pattern: Pipeline Provider + Subgraph

Period

July 2025 - November 2025 | Bucketplace (오늘의집)